You shipped the AI feature. The demo looked great. The investors were impressed. Then a customer used it in production and it did something completely unexpected, and you had no framework to explain why, or catch it before it happened.

Agentic AI reliability is one of the quietest, and most expensive, headaches in SaaS right now.

The Problem Nobody Talks About at Launch

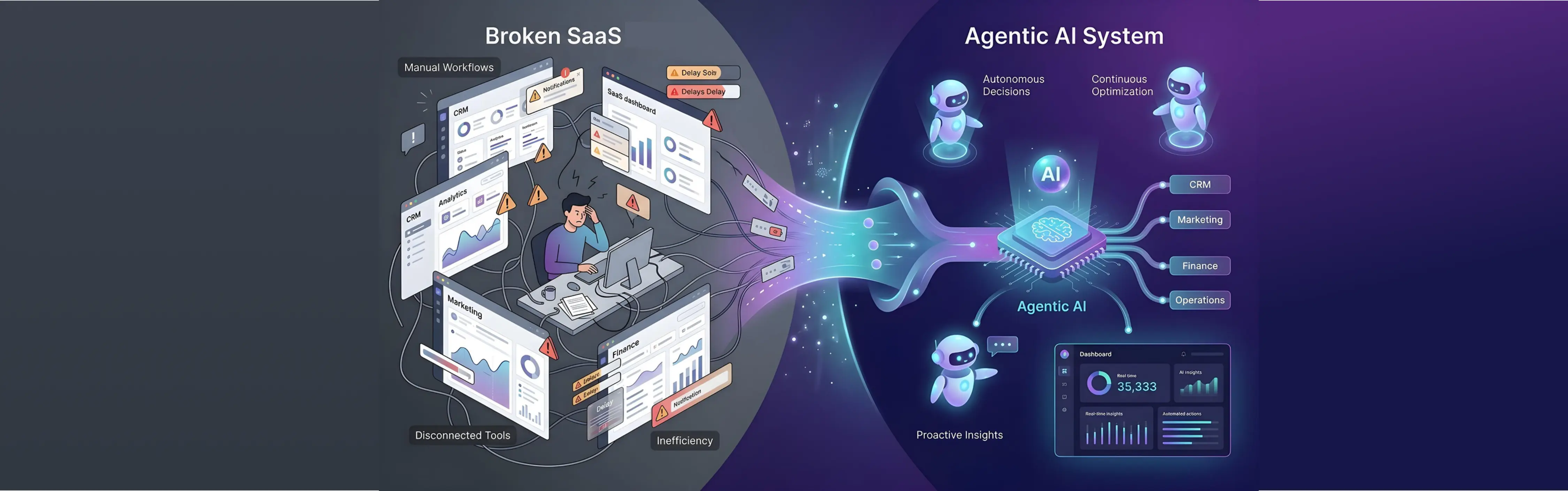

When you add a traditional software feature, it behaves the same way every single time. Click button, get result. The result is deterministic. You can test it, verify it, and ship it with confidence.

Agentic AI doesn't work like that.

Give an AI agent the same input twice and you can get two different outputs. Sometimes slightly different. Sometimes dramatically different. The agent might complete a task flawlessly on Tuesday, misinterpret it on Wednesday, and on Thursday decide to take an action you never intended it to take.

This isn't a bug in the traditional sense. It's the nature of probabilistic systems. And it's the reason agentic AI testing and evaluation is the difference between an AI feature that builds your product and one that quietly destroys it.

The uncomfortable truth for most SaaS teams is that you may have built the AI, but you haven't built the framework to know when it's going wrong.

What Agentic Actually Means (And Why It Changes Everything)

Let's use a real scenario. Say you're building an AI-powered feature for a B2B SaaS platform, something like an intelligent sales assistant for your users' sales teams.

- Basic AI version:

- A sales rep types "summarise the last three interactions with XyZCorp." The AI reads the CRM notes and returns a summary. Done. The rep reads it, decides what to do next, and takes action themselves. The AI's job ended the moment it produced text.

- Agentic AI version:

- The rep says "follow up with XyZ Corp based on where we left off." Now the AI doesn't just summarise, it reads the interaction history, infers that the last call ended with a promise to send a revised proposal, drafts that proposal using the pricing data it pulled from your internal docs, attaches the right case study based on XyZ's industry, and sends the email, all before the rep has finished their coffee.

- That's an agentic workflow. It planned steps, used tools, read and wrote data, and made judgment calls along the way. Your sales rep just got a very capable assistant who works faster than any human could.

- The difference in output between these two versions isn't subtle. One saves a rep two minutes. The other saves them two hours, and probably does the job better, because it pulled context from three systems simultaneously that the rep would have had to open manually.

- This is why agentic AI is genuinely exciting, and why serious SaaS teams are building it into their core product.

- But here's the part that requires equal seriousness: in the basic version, if the AI misread something, the rep catches it before anything happens. They're in the loop. The mistake stops at the summary.

- In the agentic version, the AI is already three steps ahead of the rep, which is the point. But it also means a misread doesn't produce a wrong answer. It produces a wrong action. An email already in XyZ's inbox. A proposal sent with the wrong pricing tier. A follow-up that references a pain point from a completely different account.

- The capability and the risk come from exactly the same place: the AI acting, not just answering.

- This is why agentic AI testing and evaluation isn't a nice-to-have, it's what separates teams shipping AI that compounds trust over time from teams shipping AI that erodes it. The goal isn't to slow the AI down. It's to make it fast and right, so your users can confidently let it run without hovering over every step.

- Done well, that's your product's biggest competitive advantage. Done without a reliability framework, it's your biggest support ticket.

The Real Cost of AI Inconsistency

1. Churn you can't explain

“Inconsistency is more damaging than being consistently wrong.”

A customer churns. Exit survey says the product wasn't reliable enough. Your team digs in and finds that the AI feature, the one you spent three quarters building, was producing inconsistent outputs for their specific use case. Not always. Not dramatically. Just enough that the team stopped trusting it.

A system that's consistently wrong can be corrected or worked around. A system that's sometimes right and sometimes wrong can't be trusted at all. Humans cannot build reliable workflows around unpredictable tools. So they abandon them.

2. Enterprise deals that don't close

Enterprise buyers have started asking a question that most SaaS teams aren't ready to answer: "How do you evaluate and monitor your AI outputs?"

If your answer is "we test before release and monitor error rates," that's not going to land well. Enterprise customers, especially in financial services, healthcare, and legal tech, need to know that you have systematic evaluation processes, that edge cases are documented, and that there's a feedback loop in place. Without that, your AI feature isn't a selling point. It's a procurement red flag. You can have the best AI in the room and still lose the deal because you can't explain how you know it works.

3. Regulatory exposure

In agentic workflows, the AI isn't just advising, it's doing. If it does the wrong thing in a regulated context, the question of accountability lands squarely on the product team.

Having a documented testing and evaluation framework at least demonstrates due diligence. Not having one demonstrates the opposite.

What Proper Agentic AI Testing Actually Looks Like

Most teams approach AI testing the same way they approach traditional software testing, which is to write some test cases, run them, check if the output looks right. This works reasonably well for simple AI features. It breaks down almost completely for agentic systems.

Here's why: in a multi-step agentic workflow, an error in step two doesn't just affect step two. It propagates. By step five, the agent is operating on a compounded misunderstanding, & the final output can look entirely reasonable while being completely

wrong.

Proper agentic AI testing and evaluation requires a different framework entirely. It has five components.

- Output consistency testing: Run the same prompt through the same agentic workflow dozens, ideally hundreds of times under varied conditions. Not to check if the answer is correct, but to measure variance. How much do outputs differ? What triggers the variance? Is it deterministic enough for your use case? You need a quantified consistency score before you ship, not a gut feeling.

- Adversarial input testing: Real users are creative. They will send your AI agent inputs it was never designed to handle, ambiguous instructions, incomplete context, contradictory requirements, unusual edge cases. Testing only with clean, well-formed inputs is testing the happy path. Adversarial testing is testing the real world.

- Tool and API failure simulation: Agentic workflows rely on chains of tools and integrations. What happens when one of them fails, returns unexpected data, or times out? Does the agent fail gracefully? Does it retry correctly? Does it communicate the failure to the user in a useful way, or does it silently produce a wrong output because it didn't know how to handle the error?

- Long-horizon task evaluation: Short tasks are easy to evaluate. Long, multi-step tasks are where reliability breaks down. Testing needs to cover the full length of your longest agentic workflows, not just isolated steps. A task that takes fifteen steps needs fifteen checkpoints, not just a pass/fail on the final output.

- Human-in-the-loop calibration: Even well-designed agentic systems need calibration points, places where a human reviews the AI's reasoning before it proceeds. Identifying where those points should be, and evaluating whether the AI is surfacing its uncertainty appropriately in those moments, is a discipline in itself.

The 5 Categories of Edge Cases You Must Stress-Test

If you're building agentic AI, these are the five categories of edge cases that will break your system in production if you don't find them first.

Why Most SaaS Teams Skip Agentic AI Evaluations

There's a structural reason this evaluation gap exists, and it's not laziness.

The pressure to ship AI features is enormous right now. Product teams are moving fast because the market is moving fast. Evaluation frameworks feel like something you build after the feature is live, once you have real data. The MVP mindset, ship it, learn from it, works well for most software. It works badly for agentic AI, where the learning can come in the form of a production incident that damages customer trust before you had a chance to catch the problem.

There's also a skills gap. Building evaluation frameworks for agentic systems requires a combination of ML engineering, QA methodology, and product thinking that most SaaS engineering teams don't have in-house yet. It's genuinely new territory. Expecting your existing QA team to cover it without specialist support is optimistic.

This is exactly the gap that a specialist agentic AI development company fills, not just in building the system, but in building the scaffolding around it that makes it safe to ship.

The Bottom Line

Agentic AI is the most powerful capability available to SaaS products right now. It's also the least understood from a reliability standpoint, and the most underestimated as a product risk.

The teams that will win, with enterprise buyers, with retention, with long-term product reputation, are not necessarily the ones who shipped AI fastest. They're the ones who built evaluation into the process early enough that they can stand behind their AI's behaviour with confidence.

Your AI feature can be your biggest competitive advantage. But without a testing and evaluation framework, it can just as easily be your biggest liability.

The gap between those two outcomes isn't talent or timing. It's a process.

.webp)

.svg)

.svg)

.avif)

Leave a Comment

Your email address will not be published. Required fields are marked *